Another problem is they ruined their own search with AI.

Kicked themselves right in the nuts.

Googling is for advertisers…

Younger generations are using other platforms to gather information.

What’s not being talked about here is that young people don’t seem to give a damn whether the information they research is accurate; what matters is whether it’s peddled by their preferred streamer. Those “other platforms” are apparently TikTok and Netflix, not exactly places known for speaking truth to power.

I’ve spent twenty years trying to believe that the children will be the saviors of the future, but I think maybe the conservatives actually succeeded in murdering education in its crib. I am now nearly fully on team “You know, maybe these kids actually are a bunch of dumb fucks who won’t save us after all.”

"I’ve heard of RFK Jr. and he says vaccines are bad. He’s more famous than scientists, so I believe him for exposing their corruption. "

I can’t wait for humanity to go extinct.

It’s not so much that they don’t give a damn, but that they can’t tell. I taught some basic English courses with a research component (most students were in their first college semester), and I’d drag them to the library each semester for a boring day on how to generate topics, how to discern scholarly sources, and how to use databases like EBSCO or JSTOR to find articles to support arguments in the essays they’d be writing for the next couple of years. Inevitably, I’d get back papers citing so-and-so’s blog, PragerU, Wikipedia, or Google’s own search results. Here’s where a lot of the problem lies: discerning sources, and knowing how to use syntax in searches, which is itself becoming irrelevant on Google etc. but NOT in academic databases. So why take the time to type the “and” and “or” and “after: 1980” and “type: peer-reviewed” when you can just write a natural-language question into a search engine and get an answer right away that seems legit in the snippet? I’d argue the tech is the problem because it encourages a certain type of inquiry and quick answers that are plausible but, more often than not, lacking in any credibility.

Is it the tech? Or is it media literacy?

I’ve messed around with AI on a lark, but would never dream of using it on anything important. I feel like it’s pretty common knowledge that AI will just make shit up if it wants to, so even when I’m just playing around with it I take everything it says with a heavy grain of salt.

I think ease of use is definitely a component of it, but in reading your message I can’t help but wonder if the problem instead lies in critical engagement. Can they read something and actively discern whether the source is to be trusted? Or are they simply reading what is put in front of them, then turning around to you and saying “Well, this is what the magic box says. I don’t know what to tell you.”

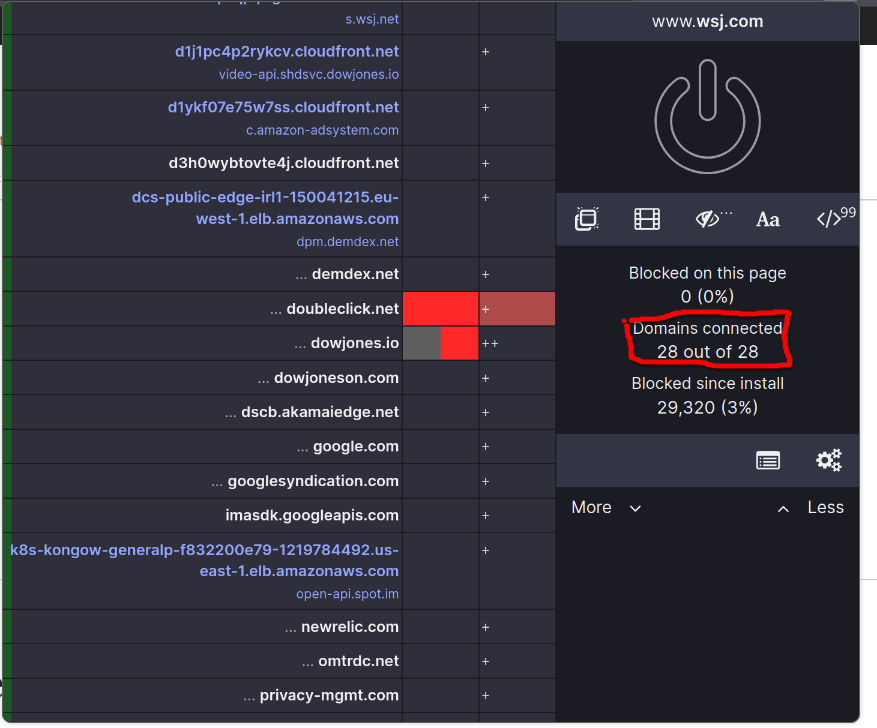

Can’t read this article thanks to the shitty paywall. Yet it has 28 trackers even though it just needs pure HTML.

Edit: thank you for the archive link, OP!

The second threat is the rise of “answer engines” like Perplexity which, well, do what they say on the tin. OpenAI has added internet search to ChatGPT, Meta Platforms is exploring building its own search engine, and even AI chatbots that can’t search the internet are proving increasingly capable at addressing many questions. They’re also becoming ever more widespread, as Microsoft and Apple integrate them directly into the operating systems of all the devices they make or support.

That is not an improvement; it’s just also not really any worse.

I don’t even know why people use Google anymore besides maps and restaurant data. The rest is all SEO corporate junk.

I search directly on medical and research/studies sites now. For the rest I use AI.